🍔 Your Takeaways

A New York federal judge ruled in February that documents created with Claude AI are not protected by attorney-client privilege - in part because Anthropic's own privacy policy allows disclosure to government authorities.

The same week, a Michigan federal magistrate judge reached the opposite result, protecting a litigant's ChatGPT conversations as work product. Which court you land in may now determine your exposure.

The types of data at risk include strategy notes, risk assessments, witness summaries, and anything your team entered independently into a consumer AI tool.

Enterprise AI tools with a signed DPA and a no-training covenant provide protection that consumer tools do not - this is the fix.

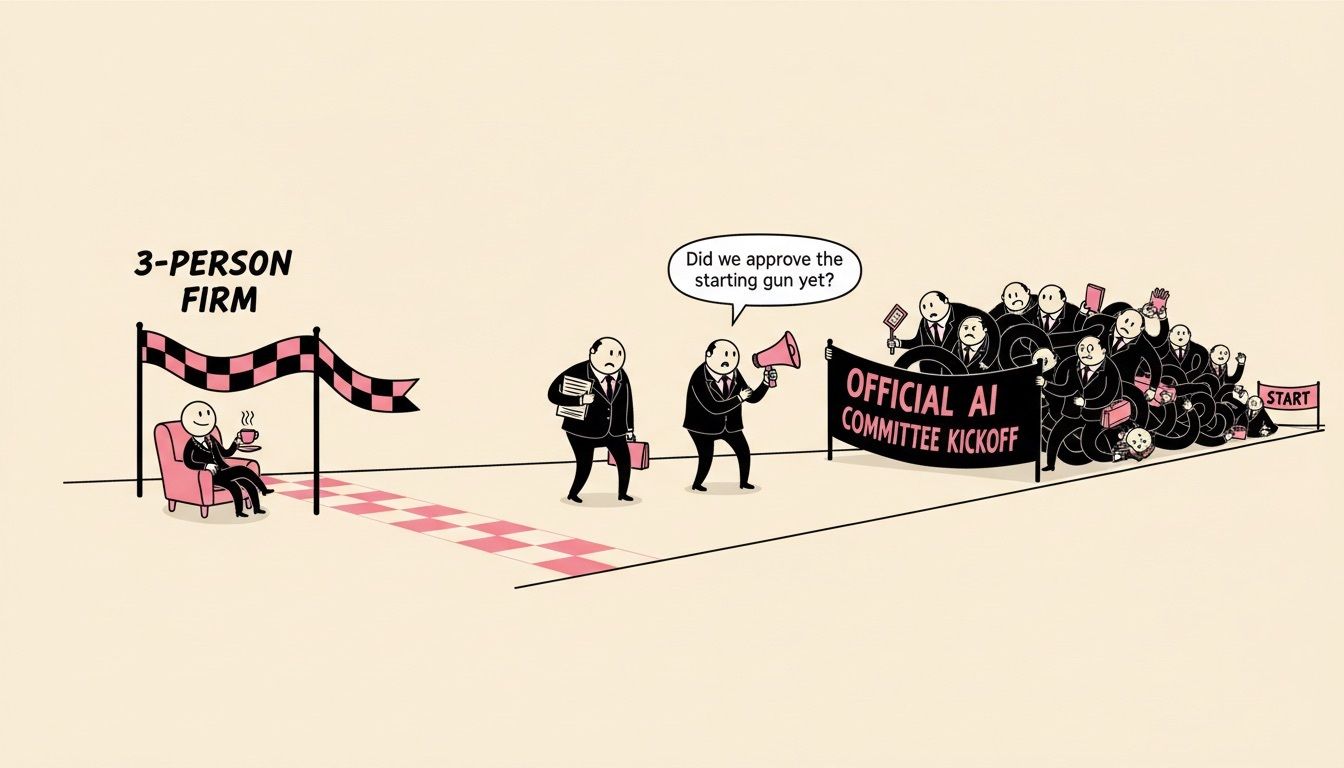

An estimated 59% of firms have no formal AI policy (Wolters Kluwer, 2026). That gap is now a liability.

A managing partner asked me recently whether the AI tools his associates are using are "safe."

I gave him the honest answer: it depends entirely on which version they're logged into.

THE RULING

⚖️ What the Judge Actually Said

In February 2026, U.S. District Judge Jed Rakoff (SDNY) ruled that documents created using Claude AI are not protected by attorney-client privilege.

The case was United States v. Heppner.

The defendant had used Claude to draft defense strategy, summarize witnesses, and assess potential charges - then shared those outputs with his lawyers.

The court denied privilege on three grounds: Claude is not a lawyer; Anthropic's own privacy policy allows disclosure to "governmental regulatory authorities" (destroying any expectation of confidentiality); and the defendant acted independently, not at his attorney's direction.

There is a narrow safe harbor worth knowing.

Judge Rakoff noted the outcome might differ if a lawyer had directed the AI use - similar to how privilege can extend to a translator or consultant retained by counsel under a Kovel arrangement (United States v. Kovel, 2d Cir. 1961).

That distinction directly affects how your firm should document AI use in litigation matters.

THE SPLIT

🔀 Same Week, Different Court

Here is where it gets complicated.

The same week as Heppner, a Michigan federal magistrate judge reached the opposite result.

In Warner v. Gilbarco, Judge Anthony Patti held that a plaintiff's ChatGPT conversations were protected work product.

His reasoning: an AI tool is a "tool," not a third party.

Sharing information with ChatGPT is not the same as sharing it with an adversary.

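Under Sixth Circuit law, work-product protection is generally waived only by disclosure to an adversary (or in a way likely to reach one), so that distinction is central to the waiver analysis.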

The result is a circuit split in the making.

Whether your firm's AI conversations are protected or discoverable may now come down to which federal district your case lands in.

Relying on that geography lottery is not a strategy.

What's Actually at Risk

Let me be specific, because "AI conversations might be discoverable" is not a useful warning by itself.

The types of information at risk include: defense strategy outlines, internal risk assessments, witness credibility analysis, summaries of client communications, anticipated opposing arguments, and research queries exploring legal positions.

The pattern Heppner penalized happens every day: someone - a client, a paralegal, an associate, or even a lawyer - independently uses a consumer AI tool to draft, analyze, or summarize, then passes that output to counsel.

That independent use, without counsel's direction, breaks the chain of privilege.

An estimated 59% of law firms have no formal AI policy (Wolters Kluwer Future Ready Lawyer, 2026).

We compared 8 enterprise AI tools law firms use every day - DPA coverage, training restrictions, and privilege-safety credentials - in one table.

THE FIX

🔐 The Consumer vs. Enterprise Line

The solution is not "stop using AI."

It is "use the right version."

Consumer tools (ChatGPT Free, Claude.ai, Gemini Basic) generally allow providers to train on your inputs, often by default unless users opt out, and to disclose data to authorities.

Enterprise tools operating under a signed DPA are different.

Harvey, CoCounsel (Thomson Reuters), and the enterprise tier of Microsoft 365 Copilot all contractually commit to no model training on client data and to defined deletion rights.

One important caveat: the enterprise version of Microsoft 365 Copilot carries these protections; the consumer version does not.

Same company, different contract, completely different exposure level.

At minimum, a DPA must include a no-training covenant, defined retention terms, notice to you when the provider receives government legal process, and a right to deletion.

YOUR RESPONSE

✅ Your Firm's 3-Step Response

Three things - not a 40-page policy document.

1. Audit what your team is actually using. Not what IT approved - what associates are actually logged into. Free consumer tools get used because they're free. This is typically the first gap we surface when we run an AI workflow assessment with a firm.

2. Update your engagement letter. Several major firms have already embedded AI usage clauses into client intake agreements. A brief clause stating that only approved enterprise tools will be used for privileged work is enough to start.

3. Document counsel direction in litigation matters. Judge Rakoff's Kovel analogy suggests privilege may hold when a lawyer directs the AI use, so any AI use in active litigation preparation should be documented as directed and supervised by counsel. One line in a matter file could be the difference.


🛠️ 10-Second Explainers - AI Tools & Tech

Relativity AI - An AI-powered e-discovery platform that automatically reviews, categorizes, and flags relevant documents in litigation.

Think of it as a paralegal who can read 50,000 documents overnight and surface only the ones that matter - without billing you for the hours.

Spellbook - An AI contract drafting tool that works inside Microsoft Word.

It's like having a contract specialist in your sidebar who's read millions of legal agreements and can draft or redline a clause in seconds.

Clio Duo - The AI assistant built into Clio's practice management platform.

It handles billing reminders, task tracking, and matter summaries - like an admin who actually understands legal workflow and never misses a deadline.

READER POLL

Has your firm updated its AI usage policy since the Heppner ruling?

A) Yes - updated within the last 30 days

B) We have a policy but it doesn't address this specifically

C) We're working on it now

D) We have no formal AI policy yet

[Reply with your letter choice] - I'll share the results in the next edition.

My Final Take…

This is not a story about stopping AI use.

It is a story about which account your lawyers are logged into.

The line between "exposed" and "protected" is a checkbox in your vendor agreement - and in most cases, the enterprise version of the tool your firm already uses has that checkbox available.

The firms that address this now will be the ones whose AI use holds up when opposing counsel goes looking.

The firms that wait will have a different conversation with their clients.

Hit reply if you want to talk through your firm's current AI tool stack and where the gaps are.

I'm happy to do a quick 20-minute walkthrough.

— Liam Barnes

At Cyberaktive, we help law firms assess, implement, and govern AI tools that actually hold up under scrutiny.

Grab some time to chat

(if you don’t see a suitable time, just shoot me an email [email protected])

How Did We Do?

Your feedback shapes what comes next.

Let us know if this edition hit the mark or missed.

Too vague? Too detailed? Too long? Too short? Too pink?

Was this week’s newsletter forwarded to you?

Sign up, it’s free.