🍔 Your Takeaways

AI adoption among legal professionals more than doubled in one year - an estimated 69% now use it at work

54% of firms have provided zero AI training and have no plans to start

Only 9% of firms have a written, actively enforced AI policy

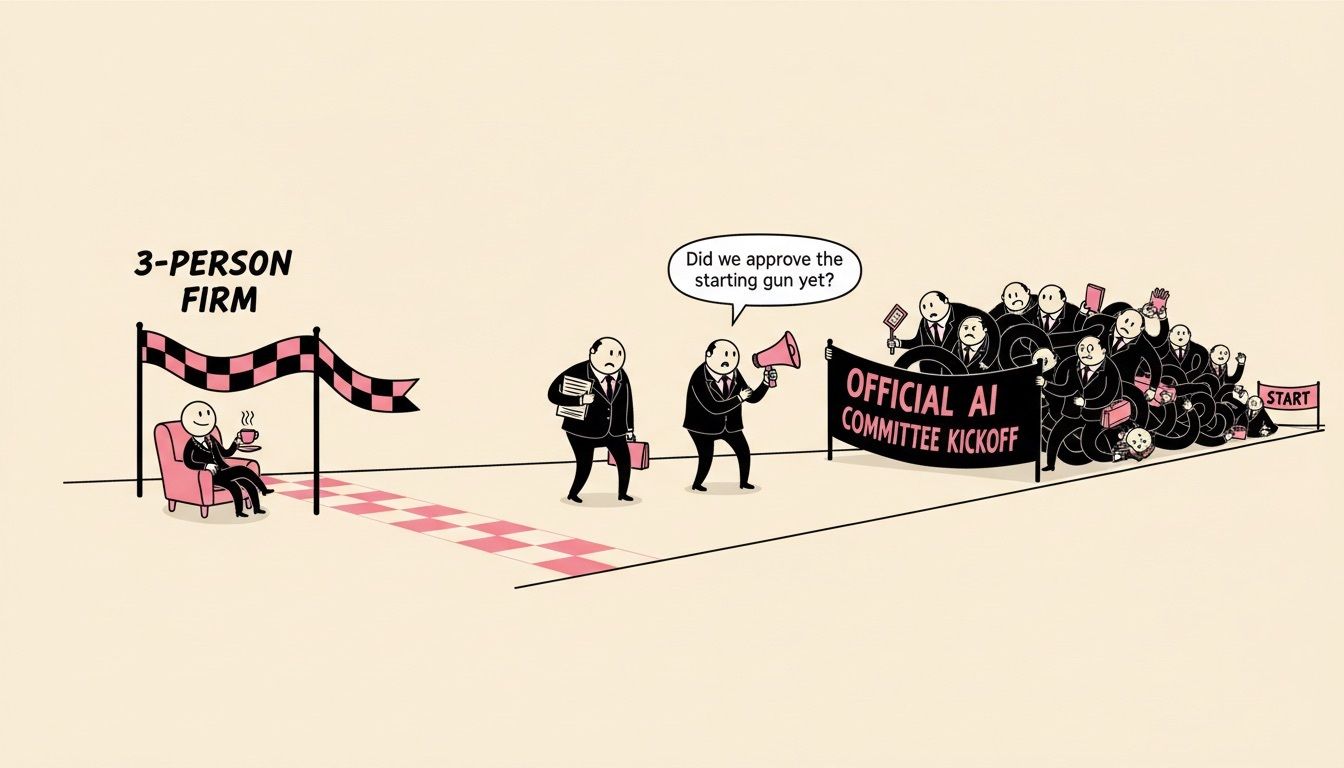

The gap between individual usage and firm governance is where real liability lives

A realistic AI policy takes three steps, not three months

When the 8am Report landed last week, I expected the AI adoption numbers to be high.

I didn't expect them to be this high.

And I really didn't expect the governance numbers to be this low.

That gap is what this edition is about.

THE DATA

📊 What the Numbers Actually Say

The 8am 2026 Legal Industry Report dropped on March 5, and the headline stat is hard to ignore.

An estimated 69% of legal professionals now use generative AI tools at work.

That figure more than doubled in a single year.

As you've probably already noticed at your own firm, this isn't a fringe experiment anymore.

Legal-specific AI usage hit 42%, also doubling from last year.

The top use cases are exactly what you'd expect: drafting correspondence (58%), general research (58%), brainstorming (54%), and summarizing documents (47%).

Here's the part that matters for the conversation we're about to have: 94% of users report measurable benefits.

Time savings.

Improved quality.

This isn't going away.

Your team is already using it, and most of them find it genuinely useful.

The question isn't whether AI belongs at your firm.

It's already there.

THE GAP

⚠️ Where the Risk Actually Lives

Here's where the 8am data gets uncomfortable.

54% of firms have provided zero training on responsible AI use - and have no plans to.

43% have no formal AI policy governing how their lawyers use these tools - and no plans to create one.

Only 9% have a written, actively enforced policy.

Let that settle for a moment.

If your firm looks like the rest of the profession, roughly seven out of ten of your lawyers are using AI tools at work, and the odds are that your firm has no guardrails around any of it.

This isn't just an IT concern.

Over 700 court cases worldwide now involve AI hallucinations.

State bars have begun initiating disciplinary action for improper AI use - specifically, using public AI tools on client work without human verification.

Look, as I see it, running a firm where most of your lawyers use a tool with zero oversight is like operating without a conflict check system.

Everything is fine - until it isn't.

And when it isn't, it's a partnership liability problem.

Not an associate problem.

Not a tech problem.

A partnership problem.

🔧 FREE TOOL

AI Policy Template Generator

Build a customized AI governance policy for your firm in 3 minutes.

Answer 7 questions about your firm's size, practice areas, and risk tolerance - get a complete, ready-to-implement AI policy document.

No generic templates.

No legal jargon walls.

Just a practical policy your partners can review this week.

THE FIX

🛠️ Three Steps That Actually Work

The good news is that closing this gap isn't as complicated as it sounds.

Depending on your firm's size and internal bandwidth, it might make sense to have an external AI legal consultant work through this with you.

But whether you bring in outside help or take ownership of the process internally, here are the three things that should be happening.

Step 1: Audit what your team is actually using.

Not what you think they're using - what they're actually using.

Survey your lawyers anonymously.

The gap between assumed and actual tool usage is consistently the biggest surprise.

Step 2: Write a realistic policy.

Not a ban.

Bans don't work.

They just drive AI usage underground, which makes the risk worse.

The North Carolina Bar Association published a useful guide on this exact point: "Beyond the Ban - Why Your Firm Needs a Realistic AI Policy in 2026."

A good policy covers three things: approved tools, required verification steps, and what never goes into a public AI system.

That's it.

Step 3: Implement structured training.

Even two hours of training puts your firm ahead of the 54% of firms that have provided none.

Focus on two skills: writing effective prompts for legal work, and verifying AI output before it goes anywhere near a client.

You don't need a six-month rollout.

You need a Tuesday afternoon and a room.

Related Legal AI News:

HFW Appoints First Head of Legal Technology Adoption - Artificial Lawyer

8am 2026 Report: AI Adoption Among Legal Professionals Doubles in One Year - LawNext

Legalweek 2026: DISCO, Epiq, and Advocacy Roll Out Major AI Platforms - XIRA

🛠️ 10 Second Explainers - AI Tools & Tech

Advocacy - A context-driven litigation platform that just emerged from stealth with $3.5M in seed funding.

Monjur Pilot - An AI-powered contracting platform designed for non-legal professionals who still need to handle contracts.

Epiq AI - Epiq expanded its AI capabilities with agentic workflows for eDiscovery, claiming fact research up to 45x faster and over 80% automated review.

READER POLL

What's stopping your firm from implementing an AI policy?

A) Don't know where to start

B) Partners can't agree on scope

C) We're waiting to see what other firms do

D) We already have one (tell us about it)

Reply with your letter choice - I'll share results next week.


My Final Take…

The firms that write their AI policy this quarter will be the ones that scale AI safely next year.

The ones that wait will be writing their policy in response to a bar complaint or a client incident.

That's not a scare tactic - it's just how governance tends to work in legal.

You don't need a perfect policy.

You need a real one.

Hit reply and tell me what your firm's approach looks like - I read every response.

— Liam Barnes

If your firm needs help mapping AI usage, building a governance framework, or training your team on responsible AI workflows, that's exactly what we do.

Grab some time to chat

(If you don’t see a suitable time, just shoot me an email at [email protected].)

How Did We Do?

Your feedback shapes what comes next.

Let us know if this edition hit the mark or missed.

Too vague? Too detailed? Too long? Too short? Too pink?

Was this week’s newsletter forwarded to you?

Sign up, it’s free.